Risk-Based CSV Approach for AI-Enabled Computerised Systems in GxP Environments

AI-driven computerised systems are rapidly entering GxP-regulated environments such as QC labs, manufacturing, and supply chain. As they do, traditional Computerised System Validation (CSV) methods are struggling to keep pace. This is why the FDA promotes a shift towards computer software assurance (CSA), a more flexible, risk-based, and efficient pathway for modern validation. Understanding how CSA differs from CSV is key to implementing risk-based validation.

Why does AI require a different Validation Approach?

Traditional computer system validation methods assume systems are static, rules-based, and deterministic. This makes validation predictable and documentation-heavy. However, AI systems—particularly machine learning (ML)—are:

- Probabilistic (produce likelihood-based outputs)

- Adaptive (may evolve over time)

- Data-dependent (performance depends on training & validation data)

By implementing a computer software assurance (CSA) approach, the validation focus shifts from:

“Did we test every requirement?”

to

“Is the system fit for its intended use? Is it controlled and trustworthy throughout its lifecycle?”

This aligns closely with GxP data integrity requirements, ensuring that patient safety, product quality, and data integrity remain central.

Risk-Based Thinking in AI Validation

Within modern computer system validation, validation effort should scale with risk. The CSA approach emphasises critical thinking, especially as AI introduces new risk vectors:

- Model errors

- Incorrect or insufficient training data

- Model drift over time

- Lack of transparency

- Incorrect integration with regulated workflows

These risks are particularly relevant in the pharmaceutical industry, where AI adoption is accelerating.

High vs Low Risk AI Use Cases

| High-Risk | Low-Risk |
| --- | --- |
| AI-driven QC analysis | AI used for scheduling (resource estimation) |
| Automated batch release | Action items for follow-up |
| Predictive maintenance that could impact GMP manufacturing | Documentation of automation tools |
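To make the high versus low split concrete, here is a minimal Python sketch of how a team might record a risk tier for each AI use case and scale assurance effort to it. The class name, the three impact flags, and the activity lists are illustrative assumptions, not taken from any regulation or guidance; a real risk assessment would be documented and justified case by case.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class AIUseCase:
    """Hypothetical record describing one AI use case."""
    name: str
    affects_product_quality: bool
    affects_patient_safety: bool
    affects_data_integrity: bool


def risk_tier(use_case: AIUseCase) -> str:
    """Return 'high' if any GxP-critical attribute is affected, else 'low'."""
    if (
        use_case.affects_product_quality
        or use_case.affects_patient_safety
        or use_case.affects_data_integrity
    ):
        return "high"
    return "low"


# Assumed mapping from tier to assurance effort (illustrative only).
ASSURANCE_ACTIVITIES = {
    "high": [
        "scripted testing",
        "documented human review",
        "ongoing performance monitoring",
    ],
    "low": ["unscripted testing", "periodic review"],
}


def assurance_plan(use_case: AIUseCase) -> List[str]:
    """Look up the assurance activities for the use case's risk tier."""
    return ASSURANCE_ACTIVITIES[risk_tier(use_case)]


batch_release = AIUseCase("Automated batch release", True, True, True)
scheduling = AIUseCase("AI used for scheduling", False, False, False)

for case in (batch_release, scheduling):
    print(case.name, "->", risk_tier(case), assurance_plan(case))
```

The point of the sketch is only that the tier, not the technology, drives how much validation effort is applied.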

What activities are considered high-risk in AI validation?

Under a GAMP 5 risk-based approach, high-risk scenarios include AI use in:

- Pre-determined process parameter testing

- Predictive control models adjusting bioreactor conditions

- AI supporting GxP data integrity requirements or audit trail verification

Example:

Using AI in a QC lab to analyse HPLC chromatograms to automatically identify:

- Purity

- Retention time deviations

- Unknown peaks

- Assay acceptance (Pass/Fail)

This directly impacts batch release decisions and introduces two risks:

- False acceptance of out-of-specification (OOS) products

- Model drift that goes undetected without oversight

This level of risk requires strict alignment with 21 CFR Part 11 and robust validation controls.
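One way to keep drift from going unnoticed in an example like this is to compare the AI's Pass/Fail result with the analyst-confirmed result over a rolling window. The Python sketch below illustrates the idea under stated assumptions: the class name, the window size, and the agreement threshold are hypothetical, and real acceptance criteria would come from the documented risk assessment.

```python
from collections import deque
from typing import Optional


class AgreementMonitor:
    """Track how often AI results match analyst-confirmed results."""

    def __init__(self, window: int = 50, min_agreement: float = 0.95) -> None:
        self.window = window
        self.min_agreement = min_agreement
        # Only the most recent `window` comparisons are kept.
        self._matches = deque(maxlen=window)

    def record(self, ai_result: str, analyst_result: str) -> None:
        """Store whether the AI result agreed with the analyst's result."""
        self._matches.append(ai_result == analyst_result)

    def agreement(self) -> Optional[float]:
        """Return the agreement rate, or None until the window is full."""
        if len(self._matches) < self.window:
            return None
        return sum(self._matches) / len(self._matches)

    def drift_suspected(self) -> bool:
        """Flag drift when agreement falls below the assumed threshold."""
        rate = self.agreement()
        return rate is not None and rate < self.min_agreement


monitor = AgreementMonitor(window=10, min_agreement=0.9)
for _ in range(10):
    monitor.record("Pass", "Pass")
stable = monitor.drift_suspected()

# Two disagreements push the rolling agreement rate to 8 out of 10.
monitor.record("Pass", "Fail")
monitor.record("Pass", "Fail")
drifting = monitor.drift_suspected()
print(stable, drifting)
```

A flag from a monitor like this would prompt investigation and human review; it is not a decision in itself.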

AI-Specific Controls in a CSA Framework

Because AI performance depends heavily on data, validation must incorporate strong governance across the lifecycle—an essential evolution of computer system validation.

AI validation should include three key domains:

These controls ensure compliance with GxP data integrity requirements, aligning with GAMP 5 software validation principles and supporting a robust approach to data integrity across GxP systems.

What are the benefits of a CSA approach to AI?

Adopting computer software assurance (CSA) within AI-driven computer system validation delivers:

- More efficient validation (less documentation, higher value)

- Stronger focus on traceability and transparency

- Improved audit readiness and 21 CFR Part 11 compliance

- Faster deployment of innovative AI solutions

- Clear lifecycle governance for models and data

This approach modernises validation practices in the pharmaceutical industry, enabling teams to move beyond rigid, checklist-driven validation.

Can AI-driven validation ever be fully autonomous in GxP environments?

Currently, regulators require a human-in-the-loop for GxP-critical AI systems.

Authorities including the FDA, EMA, and MHRA, along with ISPE's GAMP 5 guidance, emphasise:

- AI cannot autonomously make GxP decisions

- Human oversight, interpretation, and confirmation are essential

- Accountability must remain with the organisation—not the algorithm
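The human-in-the-loop principle can be expressed in software as a simple gate: the AI output is stored as a proposal, and no final result exists until a named reviewer confirms it. The Python sketch below is an illustration only. The class, field names, and sample values are hypothetical, and a production system would also need Part 11 controls such as audit trails and electronic signatures, which are out of scope here.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class AIProposal:
    """An AI-generated result awaiting human confirmation (hypothetical)."""
    sample_id: str
    proposed_result: str  # e.g. "Pass" or "Fail"
    reviewer: Optional[str] = None
    confirmed_result: Optional[str] = None

    def confirm(self, reviewer: str, result: str) -> None:
        """Record the reviewer's decision; the reviewer may override the AI."""
        if not reviewer.strip():
            raise ValueError("A named reviewer is required")
        self.reviewer = reviewer
        self.confirmed_result = result

    @property
    def final_result(self) -> str:
        """Return the confirmed result; fail if no human has reviewed it."""
        if self.confirmed_result is None:
            raise RuntimeError("No final result without human confirmation")
        return self.confirmed_result


proposal = AIProposal(sample_id="S-001", proposed_result="Pass")

try:
    proposal.final_result
    blocked = False
except RuntimeError:
    blocked = True  # the unreviewed proposal cannot be used as a result

proposal.confirm(reviewer="A. Analyst", result="Fail")
print(blocked, proposal.final_result, proposal.reviewer)
```

Keeping the proposed and confirmed results in separate fields also preserves a record of where the reviewer disagreed with the model.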

Key validation questions include:

- Is the AI output correct?

- Does it make scientific and GMP sense?

- Does it align with risk levels and regulatory expectations?

Even in lower-risk applications, periodic review is required to maintain compliance with 21 CFR Part 11 and GxP data integrity requirements.

As regulatory frameworks continue to evolve, emerging guidance such as the draft Annex 22 to the EU GMP guidelines, alongside the EU AI Act, is beginning to formalise expectations for AI validation, including lifecycle control and continuous monitoring.

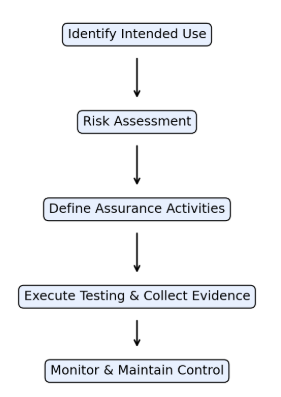

CSA workflow for AI-enabled computerised systems

The Future of AI in Computer System Validation

AI is transforming how organisations approach computer system validation, but it also introduces new complexities that traditional CSV cannot fully address. By adopting computer software assurance (CSA) principles, companies can implement a scalable, risk-based validation strategy that supports innovation while maintaining compliance.

Struggling to apply CSA principles to AI-enabled systems?

Our team specialises in CSV services for the pharmaceutical industry, helping organisations implement scalable, risk-based validation aligned with GAMP 5 software validation and regulatory expectations.

Book a consultation to assess your current validation approach

📞 Phone: 051 878 555

📧 Email: team@dataworks.ie

🌐 Website: www.dataworks.ie