Annex 22 and the EU AI Act – Impact on Software Validation and Data Integrity in Pharma

What is Annex 22 and why is it important?

Annex 22 is a proposed addition to the EU GMP Guidelines that sits alongside the EU AI Act and effectively serves as the ‘new rulebook’ for Artificial Intelligence (AI) in pharmaceutical manufacturing regulation. The annex aims to align global regulators, including the FDA, EMA, PIC/S, and MHRA, and to reinforce control and traceability in the use of AI tools within pharmaceutical manufacturing.

Annex 22 remains in draft form for now, but it is anticipated to become enforceable within the EU GMP framework. When implemented, it will set out clear guidelines for how AI must be designed, validated, and controlled in GxP-regulated pharmaceutical and biopharmaceutical environments. This has direct implications for computer system validation approaches and the evolution of validation practices across the industry.

European Commission Directorate-General for Health and Food Safety (DG SANTE) & PIC/S (Pharmaceutical Inspection Co-operation Scheme). “Annex 22: Artificial Intelligence” — EU GMP Guidelines, Volume 4. Released for public consultation: 7 July 2025 – 7 October 2025.

The Regulatory Gap: How Annex 22 Addresses AI Risks

AI introduces risks that the traditional Annex 11 (Computerised Systems) cannot fully address, although Annex 11 still provides foundational guidance. The Annex 22 draft gives insight into the regulatory expectations that will be introduced around AI use and signals that AI is here to stay. Regulators are clear: AI can be used, but it must be transparent, traceable, and well-controlled.

The goals of Annex 22 are to ensure that AI used in manufacturing is safe, explainable, and controlled, and that it protects product quality, patient safety, and GxP data integrity. It also provides practical, operational requirements for AI models.

Annex 22 does not stand alone. It operates alongside the EU AI Act (2024/1689) and Annex 11, forming a unified framework that governs how AI must be designed, validated, and operated in regulated pharmaceutical environments. This positioning gives Annex 22 significant implications for GxP systems, automated decision-making, and data-driven processes, particularly in the context of GAMP 5 software validation and modern compliance expectations.

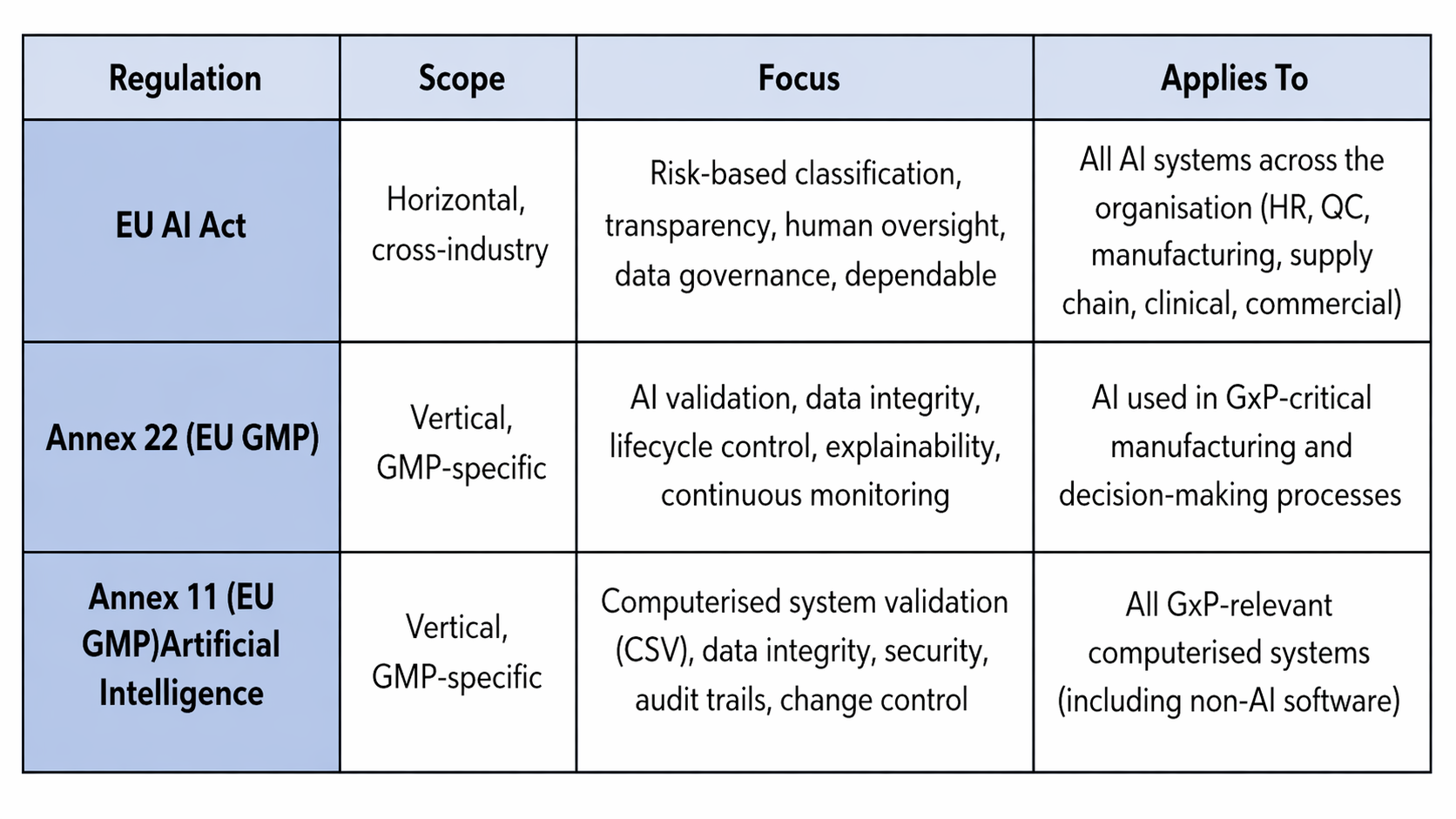

EU AI Act vs Annex 11 & Annex 22: How they differ and why pharma needs all three

Annex 11 (EU GMP) continues to underpin traditional computer system validation (CSV) practices, including security, audit trails, and change control for all GxP-relevant computerised systems (including non-AI software). Together with Annex 22, it ensures that both conventional and AI-driven systems meet evolving regulatory expectations, including 21 CFR Part 11 compliance for electronic records and signatures.

Impact on CSA/CSV: How validation must evolve with changes

The shift from computer software assurance (CSA) and traditional computer system validation (CSV) approaches to AI requires moving from deterministic systems to probabilistic ones. The current “expected result” testing model will become insufficient.

Validation of AI must include:

- Model behaviours (how AI is expected to act under normal conditions)

- Drift (a gradual change in a model’s performance)

- Performance boundaries (defined limits within which a model is considered safe, reliable, and acceptable)
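
As a rough illustration of how the three items above might be checked in practice, the sketch below compares recent model accuracy against a validated baseline. The class and function names, the threshold values, and the choice of accuracy as the metric are all assumptions made for illustration; the draft Annex 22 does not prescribe any of them.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PerformanceBoundary:
    """Hypothetical acceptance limits agreed during validation."""
    baseline_accuracy: float  # accuracy measured when the model was validated
    min_accuracy: float       # hard lower limit (the performance boundary)
    max_drift: float          # tolerated drop from baseline before review


def assess_performance(recent_accuracy: float, limits: PerformanceBoundary) -> str:
    """Classify recent performance as 'ok', 'drift', or 'out_of_bounds'."""
    if recent_accuracy < limits.min_accuracy:
        return "out_of_bounds"
    if limits.baseline_accuracy - recent_accuracy > limits.max_drift:
        return "drift"
    return "ok"


# Illustrative values only.
limits = PerformanceBoundary(baseline_accuracy=0.97, min_accuracy=0.90, max_drift=0.03)
for accuracy in (0.96, 0.92, 0.85):
    print(accuracy, assess_performance(accuracy, limits))
```

In this sketch a "drift" result would prompt a documented review, while "out_of_bounds" would mean the model is no longer operating within its validated limits.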

The current draft of Annex 22 places strong emphasis on clearly defining how an AI model is intended to be used — including the type of data it relies on, the outputs it generates, and the level of risk it introduces to the process. This level of clarity is essential because it creates a traceable record that supports robust computer system validation and enables organisations to assess whether system decisions are appropriate and trustworthy.

The introduction of Annex 22 will reinforce continuous performance monitoring and re-validation triggers based on model updates or data changes. This will require documented, expert-guided evaluation and continued alignment with CSA principles, with an emphasis on the critical thinking and risk-based testing approaches increasingly adopted in CSV services within the pharmaceutical industry.
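
One possible way to make re-validation triggers explicit is to compare the currently deployed state against the state recorded at validation. The following is a minimal sketch under assumed names (`ValidatedState`, `revalidation_reasons`) and assumed identifiers; it only flags differences and leaves the decision to a documented expert evaluation.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ValidatedState:
    """Hypothetical snapshot of what was in place at the last validation."""
    model_version: str
    training_data_hash: str


def revalidation_reasons(validated: ValidatedState, current: ValidatedState) -> list[str]:
    """Return the reasons, if any, why a re-validation review should be opened."""
    reasons = []
    if current.model_version != validated.model_version:
        reasons.append("model updated")
    if current.training_data_hash != validated.training_data_hash:
        reasons.append("training data changed")
    return reasons


# Illustrative values only.
validated = ValidatedState(model_version="1.2.0", training_data_hash="abc123")
deployed = ValidatedState(model_version="1.3.0", training_data_hash="abc123")
print(revalidation_reasons(validated, deployed))
```

An empty list means no trigger was detected by these two checks; it does not replace periodic review.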

Data Integrity under Annex 22: New Expectations for AI-Driven Systems

What does Annex 22 require in relation to data integrity?

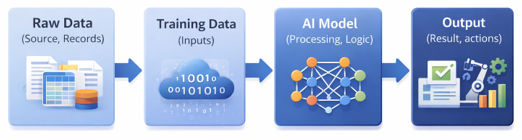

1. Data becomes a regulatory artefact

Organisations must demonstrate the representativeness, completeness, and relevance of training data. This includes documented data lineage to provide a clear, auditable record of where the data originated, how it was processed by the AI system, and how it contributed to the final validated output, aligning closely with evolving GxP data integrity requirements.
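
Documented data lineage can take many forms. As one hedged example, the sketch below records each processing step as an entry whose hash covers the previous entry, so any later alteration of the record becomes detectable. The function names, step names, and field layout are assumptions for illustration, not a format required by Annex 22.

```python
import hashlib
import json


def _entry_hash(step: str, details: dict, previous_hash: str) -> str:
    """Hash a lineage entry together with the hash of the entry before it."""
    payload = json.dumps(
        {"step": step, "details": details, "previous_hash": previous_hash},
        sort_keys=True,
    )
    return hashlib.sha256(payload.encode("utf-8")).hexdigest()


def add_lineage_step(chain: list[dict], step: str, details: dict) -> list[dict]:
    """Return a new chain with one more processing step appended."""
    previous_hash = chain[-1]["hash"] if chain else ""
    entry = {
        "step": step,
        "details": details,
        "previous_hash": previous_hash,
        "hash": _entry_hash(step, details, previous_hash),
    }
    return chain + [entry]


def verify_chain(chain: list[dict]) -> bool:
    """Check that no entry has been altered, removed, or reordered."""
    previous_hash = ""
    for entry in chain:
        if entry["previous_hash"] != previous_hash:
            return False
        if _entry_hash(entry["step"], entry["details"], previous_hash) != entry["hash"]:
            return False
        previous_hash = entry["hash"]
    return True


# Hypothetical steps and details.
lineage = add_lineage_step([], "extract", {"source": "batch_records"})
lineage = add_lineage_step(lineage, "clean", {"rows_removed": 12})
lineage = add_lineage_step(lineage, "train", {"model_version": "1.2.0"})
print(verify_chain(lineage))
```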

In addition, the increasing use of SaaS-based AI tools introduces new regulatory considerations, as continuous vendor-driven updates can alter model behaviour and affect the validated state. Annex 22, read together with the EU AI Act, places clear expectations on lifecycle management, change control, and post-market monitoring.

2. Audit-ready data governance

Organisations must demonstrate full traceability across data, models, and outputs. This includes version control for datasets and models, as well as secure, compliant storage of training and test data, all of which are key expectations for maintaining 21 CFR Part 11 compliance in digital systems.
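
Version control for datasets and models is often supported by content fingerprints. The sketch below, using hypothetical names (`register_version`, a plain dictionary as the registry), stores a SHA-256 digest per named version and refuses to let an existing version be silently overwritten with different content. A real system would use controlled, access-restricted storage rather than an in-memory dictionary.

```python
import hashlib


def fingerprint(content: bytes) -> str:
    """Return the SHA-256 digest of a dataset or model artefact."""
    return hashlib.sha256(content).hexdigest()


def register_version(registry: dict[str, str], name: str, version: str, content: bytes) -> str:
    """Record the digest for name@version; reject changed content under the same version."""
    key = f"{name}@{version}"
    digest = fingerprint(content)
    if key in registry and registry[key] != digest:
        raise ValueError(f"{key} is already registered with different content")
    registry[key] = digest
    return digest


# Illustrative artefact names and contents.
registry: dict[str, str] = {}
register_version(registry, "training_set", "1.0", b"row1\nrow2\n")
register_version(registry, "training_set", "1.1", b"row1\nrow2\nrow3\n")
print(sorted(registry))
```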

3. Strengthened ALCOA+ alignment

AI systems must support attributable model decisions with legible and explainable outputs and maintain original and accurate logs of model behaviour in cases such as data drift. Generative AI is permitted when it meets ALCOA+ expectations for attribution, explainability, and traceability.

Generative models that cannot provide audit logs, provenance, or explainable outputs fall outside acceptable use and should not be used in regulated or compliance-critical processes governed by computer system validation frameworks.
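
To make the idea of an attributable, legible log of model behaviour more concrete, here is a minimal sketch of a single decision record. The field names and the `decision_log_entry` helper are assumptions for illustration; which fields are actually needed depends on the intended use and the organisation's own procedures.

```python
import json
from datetime import datetime, timezone


def decision_log_entry(model_version: str, input_id: str, output: str,
                       confidence: float, reviewed_by: str) -> str:
    """Build one JSON log line describing a model decision."""
    entry = {
        "timestamp": datetime.now(timezone.utc).isoformat(),  # recorded at time of decision
        "model_version": model_version,  # which model produced the output
        "input_id": input_id,            # reference to the original input record
        "output": output,
        "confidence": confidence,
        "reviewed_by": reviewed_by,      # person accountable for the decision
    }
    return json.dumps(entry, sort_keys=True)


# Hypothetical identifiers.
line = decision_log_entry("1.2.0", "sample-0042", "reject", 0.91, "qa.reviewer")
print(line)
```

Writing such lines to append-only storage, rather than an editable file, is one way to help keep the log original and accurate.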